(Note that information is slightly different than evidence more below.) Until the invention of computers, the Hartley was the most commonly used unit of evidence and information because it was substantially easier to compute than the other two. It is also sometimes called a Shannon after the legendary contributor to Information Theory, Claude Shannon. The final common unit is the “bit” and is computed by taking the logarithm in base 2. The next unit is “ nat” and is also sometimes called the “nit.” It can be computed simply by taking the logarithm in base e. This choice of unit arises when we take the logarithm in base 10. We have met one, which uses Hartleys/bans/dits (or decibans etc.).

There are three common unit conventions for measuring evidence. By quantifying evidence, we can make this quite literal: you add or subtract the amount! Other Unit Systems In the context of binary classification, this tells us that we can interpret the Data Science process as: collect data, then add or subtract to the evidence you already have for the hypothesis. The formula to find the evidence of an event with probability p in Hartleys is quite simple: It is also called a “dit” which is short for “decimal digit.” The Hartley has many names: Alan Turing called it a “ban” after the name of a town near Bletchley Park, where the English decoded Nazi communications during World War II. In order to convince you that evidence is interpretable, I am going to give you some numerical scales to calibrate your intuition.įirst, evidence can be measured in a number of different units.

Interpreting Evidence: Measuring in Hartleys Second, the mathematical properties should be convenient. In general, there are two considerations when using a mathematical representation. Jaynes in his post-humous 2003 magnum opus Probability Theory: The Logic of Science. For interpretation, we we will call the log-odds the evidence. My goal is convince you to adopt a third: the log-odds, or the logarithm of the odds. For example, if I tell you that “the odds that an observation is correctly classified is 2:1”, you can check that the probability of correct classification is two thirds. There is a second representation of “degree of plausibility” with which you are familiar: odds ratios.

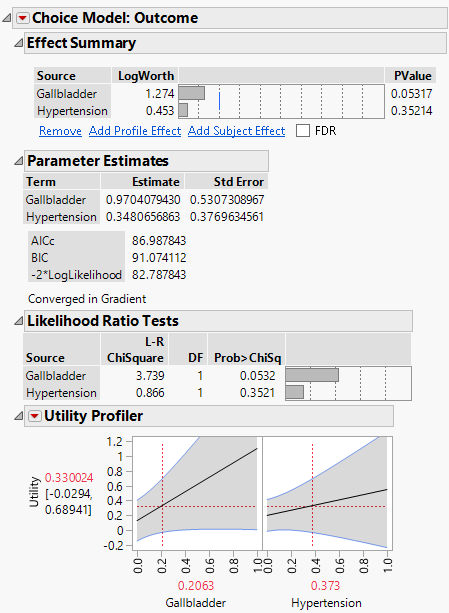

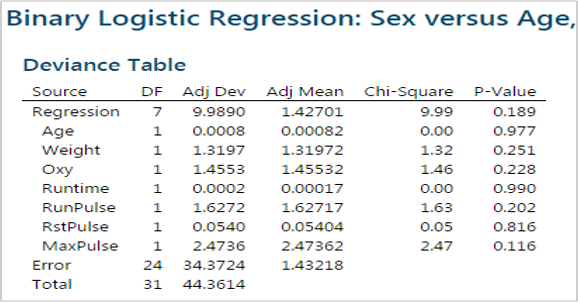

But this is just a particular mathematical representation of the “degree of plausibility.” We are used to thinking about probability as a number between 0 and 1 (or equivalently, 0 to 100%). Part 1: Two More Ways to Think about Probability Odds and Evidence This post assumes you have some experience interpreting Linear Regression coefficients and have seen Logistic Regression at least once before. Using that, we’ll talk about how to interpret Logistic Regression coefficients.įinally, we will briefly discuss multi-class Logistic Regression in this context and make the connection to Information Theory. The trick lies in changing the word “probability” to “ evidence.” In this post, we’ll understand how to quantify evidence. If you’ve fit a Logistic Regression model, you might try to say something like “if variable X goes up by 1, then the probability of the dependent variable happening goes up by ?” but the “?” is a little hard to fill in. Logistic Regression suffers from a common frustration: the coefficients are hard to interpret. Or a better way to think about probability in terms of evidence
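To make the relationship between probability, odds, and evidence concrete, here is a minimal Python sketch. The function names `odds_to_probability` and `evidence` are illustrative choices of mine, not anything from a library. It assumes evidence is the log-odds, with the logarithm base selecting the unit: base 10 for Hartleys, base e for nats, base 2 for bits.

```python
import math


def odds_to_probability(odds_for, odds_against=1.0):
    """Convert odds of the form odds_for:odds_against into a probability."""
    return odds_for / (odds_for + odds_against)


def evidence(p, base=10.0):
    """Return the log-odds of probability p in the given logarithm base.

    base=10 gives Hartleys, base=math.e gives nats, base=2 gives bits.
    """
    if not 0.0 < p < 1.0:
        raise ValueError("p must be strictly between 0 and 1")
    return math.log(p / (1.0 - p), base)


# The 2:1 odds example: the probability is two thirds.
p = odds_to_probability(2, 1)

hartleys = evidence(p, base=10)
nats = evidence(p, base=math.e)
bits = evidence(p, base=2)

print(f"p = {p:.4f}")
print(f"evidence: {hartleys:.4f} Hartleys, {nats:.4f} nats, {bits:.4f} bits")
```

With 2:1 odds, the evidence is about 0.30 Hartleys, 0.69 nats, or 1 bit. Even odds (p = 0.5) give zero evidence in every unit, and probabilities below one half give negative evidence.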
